Sustainability and public interest

“The image of AI as a tool is far too simple”

Theresa Züger in an interview with Jörg Döbereiner

You developed a method that should help determine whether a certain AI project is essentially beneficial or harmful. What criteria do you think make sense in this context?

Our criteria are based on universal human rights and the UN Sustainable Development Goals (SDGs). We also orient ourselves towards other standards, such as the European Union’s AI Act, one of the most comprehensive AI laws in the world. We focus on the impact of AI applications on the public interest and sustainability, both of which are well-established social concepts. We ask ourselves: When an AI project has a certain goal related to sustainability, does it reach it? What negative effects could occur? To evaluate that, we use our audit method to examine various dimensions of sustainability, such as social, economic and ecological aspects.

Before we discuss your method in more detail, let’s take a look at the context: to what extent is AI already helping to promote sustainability and the public interest?

First, I think it’s important to clarify what we mean when we say “AI”. A rough distinction can be made between generative and non-generative machine-learning models. Generative AI creates new content such as text, video or programming code on the basis of deep learning and in response to textual instructions, so-called prompts. It includes popular chatbots like ChatGPT, Claude or Gemini. Non-generative models, which are used for instance to detect patterns, have been around much longer. These models generally use smaller amounts of data than generative AI.

Non-generative models in particular are already being used in numerous projects that aim to improve sustainability and advance the public interest. For example, they help with biodiversity research by observing animals and evaluating data through pattern recognition. Or they analyse satellite imagery to improve reforestation. Other applications are designed to help reduce climate-damaging emissions, for instance in building climatisation or during the optimisation of industrial processes.

From a global perspective, however, it is difficult to say how strong the positive impact of AI is in these fields, partly because of so-called rebound effects. These are changes that negate any savings that have been achieved. For example, if a company conserves resources in one area with the help of AI systems, but then expands elsewhere and consumes more resources, no net savings remain. Such effects can only be determined retrospectively as part of a more comprehensive evaluation.

But you can’t blame AI for that kind of rebound effect.

True. But you can’t blame AI itself for anything. It’s always a human decision to use an AI system for a particular purpose, such as conserving resources. The important thing is to look beyond short-term savings. Unfortunately, many aspects of digitalisation have shown that while savings may be possible, they often do not lead our economy to ultimately consume fewer resources. Moreover, many statements that Big Tech companies make about AI serving a sustainable transformation are not sufficiently based on evidence. At the end of the day, while AI can have positive effects, these can only be gauged for individual projects and not easily for the sum of society’s AI systems.

One of the clearly negative impacts of AI on the public interest is its use in the service of disinformation, for instance to manipulate images and videos. But you write that AI has problematic aspects even when it is used for ostensibly “good” reasons. What do you mean by that?

This point is not meant to question whether AI systems can have positive social effects in specific scenarios. At issue are rather the fundamental dilemmas that are currently associated with the generative AI industry. Generative AI in particular tends to consume a great deal of resources, from energy to water and minerals to disposal as e-waste. Many data workers who train AI and moderate content are poorly paid, work under very precarious conditions and suffer from severe psychological stress. Other important questions relate to global justice: Which nations and industries profit the most from AI? Who is more likely to suffer from resource depletion and poor working conditions? Some researchers are already talking about new forms of colonialism.

How are advantages and disadvantages distributed in this context?

Very obviously, it is the “global majority” that is most likely to suffer: people with African, Asian, Latin American or mixed backgrounds who make up the majority of the global population. Poorer countries, where many of the data workers live but where no large AI industry is located, tend to be disadvantaged. Conversely, Western nations, and above all the USA, are profiting from the affordable labour in these countries and from the ability to extract resources and export e-waste there.

We also have to be clear that the AI industry is backed by very powerful people – particularly in the USA – who are advancing anti-democratic ideologies. Elon Musk and others have declared the goal of replacing state apparatuses with automation. Since the beginning of Donald Trump’s second term, we’ve seen that this isn’t fiction but rather a scenario that is actively being pursued.

All of this doesn’t necessarily mean that we shouldn’t use AI. But we should be aware of these issues, particularly when we talk about using AI to serve the public interest. And we should consider how we can change these conditions politically or adapt our individual usage.

Should AI be seen as a tool that can be used for various purposes, beneficial as well as harmful?

The image of AI as a tool is far too simple. It conceals the enormous complexity behind it. AI is an umbrella term for very different forms of technology. These are connected to each other over a huge network of material structures, like data centres and the industrial infrastructure in which data workers operate. These technologies are also being used under very difficult social conditions that confront us with the dilemma described above when we want to employ AI to promote sustainability and the public interest.

That brings us back to the audit method you developed to evaluate AI projects. How does that work?

First, we and our partners, Greenpeace and Gemeinwohl-Ökonomie Deutschland (Economy for the Common Good Germany), identify potential projects and hold preliminary discussions about whether an audit can take place. It’s important that an AI system already be in use and not just in the research phase. Then we request documents and conduct interviews. For example, we are interested in the goals the projects are pursuing and how these goals are evaluated. But we also take a look at the technical infrastructure and consider the ecological footprint.

We ask a total of over 200 questions, including: Do users have a way to contact a project directly? Can they criticise a project if they are impacted by an application and notice a problem? How were decisions about the system’s design made and on the basis of what arguments? This goes significantly deeper than a traditional impact assessment. We want to understand the context of the projects more precisely, and to that end we have integrated various existing methods into our model.

How do you evaluate the data?

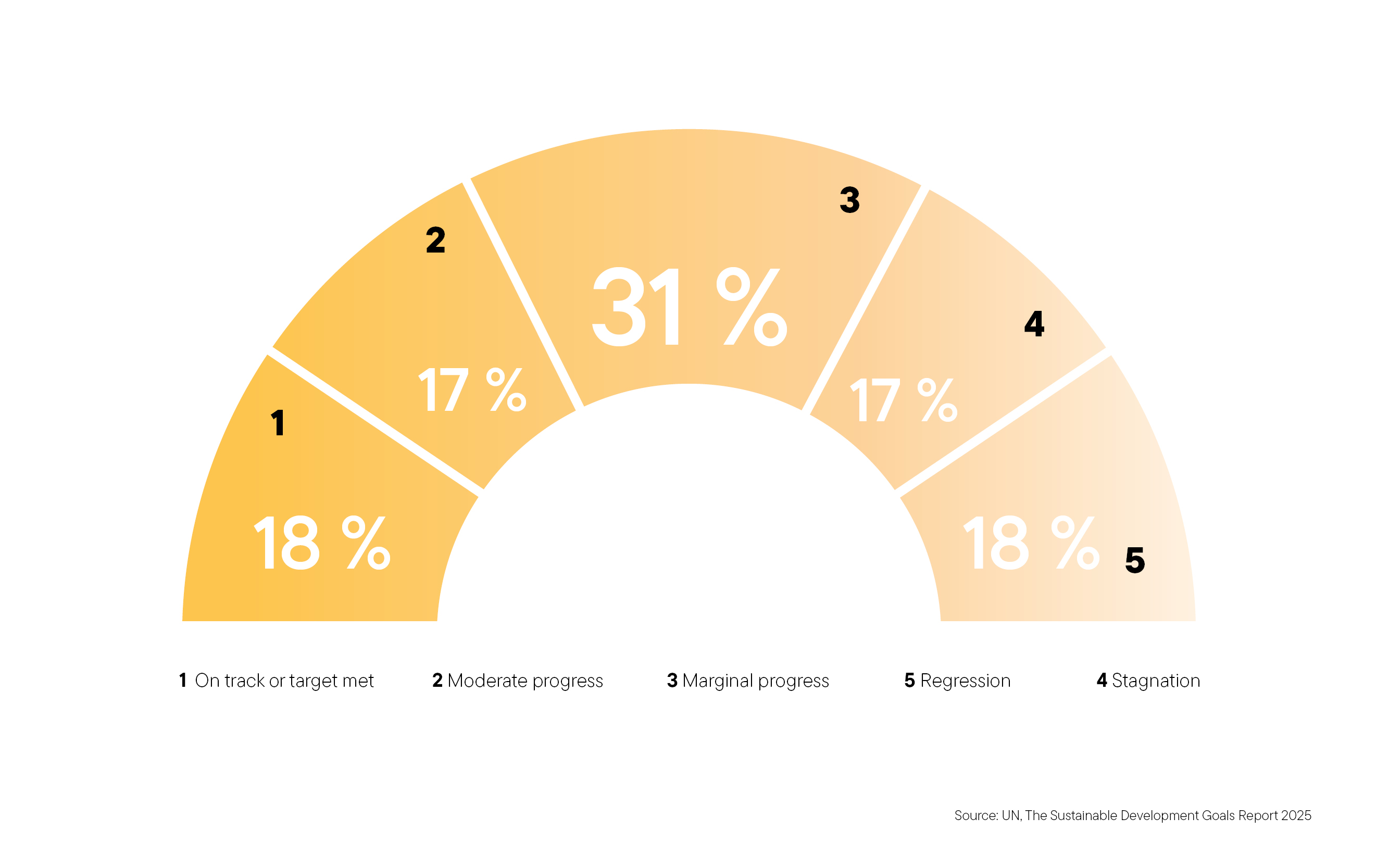

We analyse the content qualitatively and also assess metrics and numbers. Then we evaluate individual aspects of a project using a five-point scale. The criteria are inspired by those that the UN defined for its own AI projects, the UN Principles for the Ethical Use of AI. They include, for instance, the question of whether a system is necessary and appropriate, or in other words whether there is a much simpler way to solve the problem at hand. Other aspects play a role too, such as security, discrimination, human oversight, transparency and data protection.

Who is your audit intended for?

So far, we have audited two projects by established NGOs in the area of AI and democracy: one on fighting disinformation and one on democratic opinion formation. The next will be in the area of administrative digitalisation. To date, we have limited ourselves to projects that aspire to contribute to greater sustainability and promote the public interest. But it would be possible to evaluate other projects using the same criteria.

Do you think leading AI companies would be interested in having their own products evaluated according to these criteria?

In the context of the global trend towards generative AI, we tend to see the opposite: sustainability and the public interest are being put on the back burner. This can even be seen in the Big Tech companies’ sustainability reports, which have revised goals that were already set. Many of the AI systems that we see being used have goals that are completely different from promoting the public interest. Often, they are focused on more consumption and ad placement; sometimes also on spreading disinformation. Control and surveillance are also areas of application in which AI development is increasingly running counter to the principles of public interest and sustainability.

But especially because of these developments, Europe has the chance and the responsibility to develop a different vision and ecosystem for AI, which could centre around an alternative democratic and sustainable future.

Link

“Impact AI” project at the Alexander von Humboldt Institute for Internet and Society

Theresa Züger is the head of research on societal values, transformation and artificial intelligence at the Alexander von Humboldt Institute for Internet and Society in Berlin.

zueger@hiig.de